Augmented reality, virtual reality, and even private 5G networks have opened up many possibilities to make life easier for patients, caregivers, and hospital staff. Here is an overview of three solutions that apply these technologies.

Augmented reality to help with repositioning in a chair

A patient who is poorly seated in a chair can face several complications: pressure sores, postural disorders, reduced quality of life, etc. Caregivers and family members are not necessarily trained to reposition the patient optimally. Moreover, the patient is not always aware of being badly positioned.

In partnership with the Pôle Saint-Hélier in Rennes, b<>com researchers have developed a tool for professional and family caregivers. A depth camera films the patient in the chair, and an algorithm automatically detects the 3D positions of the body parts: torso, head, arms, legs, and so on. From these it determines the patient's overall posture and compares it with a reference posture recorded beforehand by a health professional. Live video feedback then shows the caregiver which body parts need to be repositioned to reach the ideal posture.
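As an illustration only, the comparison step described above can be sketched in a few lines of Python. This is not b<>com's algorithm: the joint names, the coordinates, the 5 cm tolerance, and the `parts_to_reposition` helper are all assumptions made for the example, and the detected 3D positions are assumed to be already available from the depth camera.

```python
import math

# Assumed tolerance: a body part farther than this from its reference
# position is reported. The article does not give an actual value.
TOLERANCE_M = 0.05


def parts_to_reposition(current, reference, tolerance=TOLERANCE_M):
    """Return {body part: deviation in metres} for parts outside the tolerance.

    Both arguments map a (hypothetical) body-part name to an (x, y, z)
    position in metres, expressed in the same coordinate frame.
    """
    deviations = {}
    for part, ref_pos in reference.items():
        if part not in current:
            continue  # part not detected in this frame
        distance = math.dist(current[part], ref_pos)
        if distance > tolerance:
            deviations[part] = distance
    return deviations


# Reference posture, as a health professional might have recorded it.
reference = {
    "head": (0.00, 1.20, 0.10),
    "torso": (0.00, 0.80, 0.05),
    "left_arm": (-0.30, 0.75, 0.10),
}
# Posture detected in the current frame (made-up values).
current = {
    "head": (0.12, 1.15, 0.10),
    "torso": (0.01, 0.80, 0.06),
    "left_arm": (-0.30, 0.74, 0.10),
}

flagged = parts_to_reposition(current, reference)
for part, distance in flagged.items():
    print(f"{part}: {distance:.2f} m from the reference posture")
```

In this made-up frame only the head is outside the tolerance, so only the head would be highlighted in the video feedback. A real system would more likely compare joint angles or orientations than raw positions.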

The advantages of the solution are numerous:

- it helps achieve proper positioning, gives reassurance that the positioning is correct, and builds positioning skills;

- a caregiver who does not know the patient can also reposition him correctly;

- ease of installation and use.

This work was carried out as part of the Handicap Innovation Territoire project, led by Lorient Agglomération and supported by the French State under the Innovation Territories component of the Future Investment Program, managed by the Banque des Territoires.

Real-time remote patient education through virtual reality

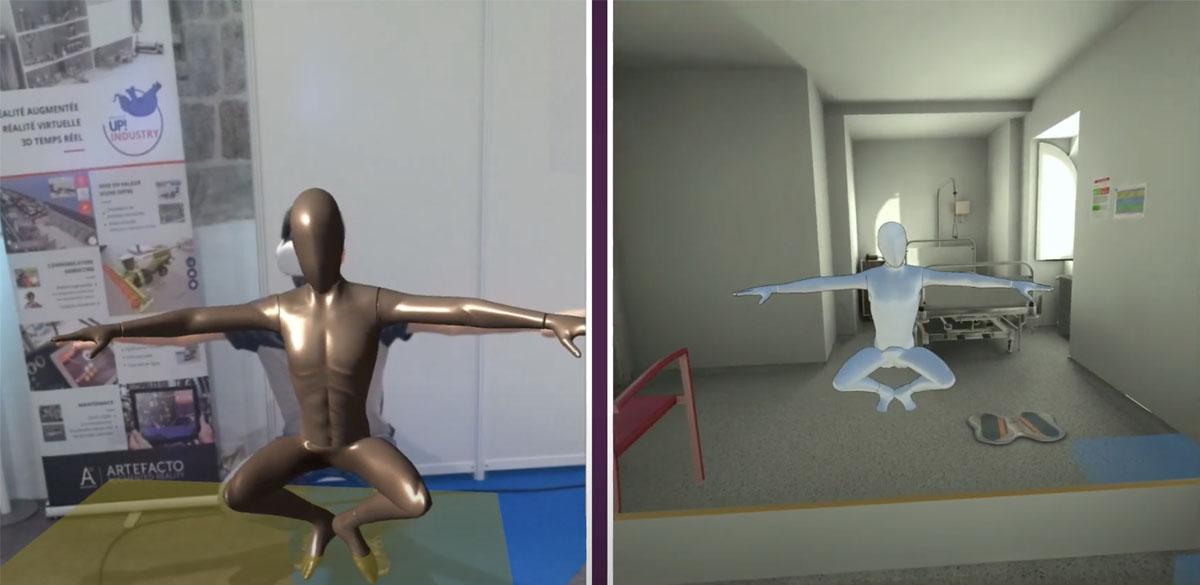

In December 2022, b<>com and its partners Artefacto, Apizee, and the Pôle Saint-Hélier won the SOFMER prize for their project on deploying virtual reality tele-rehabilitation scenarios with real-time avatarization of the patient. Awarded by the French Society of Physical Medicine and Rehabilitation, this prize rewards technical innovation in re-education, rehabilitation, disability, and services to users, caregivers, and professionals. Artefacto provided the scripting engine, and Apizee provided the secure video link with the remote practitioner.

The innovation: a depth camera linked to an algorithm detects the patient's 3D posture, i.e., positioning and movements, in real time. Equipped with a wireless virtual reality headset, the patient carries out the rehabilitation program either alone or accompanied by the practitioner over videoconference, following instructions that the practitioner has prepared in advance. Thanks to posture estimation, the captured movements and positions are applied to a 3D avatar displayed in the virtual reality headset, which recreates a feeling of proprioception in the immersive environment and reduces the effects of cybersickness. The recorded posture data can then be sent to the professional, who can assess the quality and amplitude of the movements and, if necessary, adapt or correct the program for the next rehabilitation session.

What sets this project apart is the real-time avatarization of the entire body without the need for sensors worn on the patient's body.
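To give an idea of how recorded posture data could let a practitioner check the amplitude of a movement, here is a minimal Python sketch. It is an assumption-laden illustration and not the project's implementation: the `joint_angle_deg` and `range_of_motion` helpers, the choice of the elbow, and the sample coordinates are all hypothetical.

```python
import math


def joint_angle_deg(a, b, c):
    """Angle in degrees at joint b, between segments b->a and b->c.

    a, b, c are (x, y, z) positions, e.g. shoulder, elbow, wrist.
    """
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("two joints coincide; the angle is undefined")
    cosine = sum(x * y for x, y in zip(v1, v2)) / norm
    # Clamp to [-1, 1] to absorb floating-point rounding before acos.
    cosine = max(-1.0, min(1.0, cosine))
    return math.degrees(math.acos(cosine))


def range_of_motion(frames):
    """Return (min, max) joint angle over a recorded session.

    frames is a list of (a, b, c) position triples, one per captured frame.
    """
    angles = [joint_angle_deg(a, b, c) for a, b, c in frames]
    return min(angles), max(angles)


# Made-up recording of (shoulder, elbow, wrist): arm straight, then bent.
session = [
    ((0.0, 0.0, 0.0), (0.0, -0.3, 0.0), (0.0, -0.6, 0.0)),
    ((0.0, 0.0, 0.0), (0.0, -0.3, 0.0), (0.3, -0.3, 0.0)),
]

smallest, largest = range_of_motion(session)
print(f"elbow angle ranged from {smallest:.0f} to {largest:.0f} degrees")
```

A summary like this, computed per exercise, is one plausible form the data sent to the professional could take.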

Combining a private 5G network and augmented reality to create ephemeral resuscitation rooms

Resuscitation departments must respond to crises on an ad hoc basis, which leads them to set up temporary resuscitation units in areas not designed for this purpose, such as single rooms along a hallway with no glass partitions. Quickly and efficiently deploying a private 5G network to transmit video and alarms from monitoring devices makes the new medical area operational, so that patients can be monitored in the best conditions.

A private 5G network removes the dependence on existing connectivity while maintaining reliability and security in the transmission of patient data. 5G also makes it possible to set up video solutions that support the work of the nursing staff. For example, when patients in intensive care units wake up, they may be startled by the equipment around them, especially the intubation equipment. A bad reflex or a panic reaction can lead to complications for the patient. To avoid this, our researchers have developed a video-based patient supervision tool. Here too, a camera connected to an algorithm detects the position and movements of the head, arms, and hands. If the patient shows signs of agitation, an alert in the supervision room warns the staff so that they can intervene quickly, before complications arise. The focus is on the accuracy and relevance of the alarms, to avoid adding to the noise and visual pollution already present in these departments.
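The article does not say how agitation is detected or how alarms are filtered. Purely as a sketch of the idea, the Python example below raises an alert only when a tracked keypoint moves fast for several consecutive frames, so that a single abrupt gesture does not trigger an alarm. The `AgitationDetector` class, the speed threshold, the frame count, and the keypoint names are all assumptions.

```python
import math


class AgitationDetector:
    """Flags sustained fast movement of tracked keypoints (hypothetical)."""

    def __init__(self, speed_threshold=0.5, min_consecutive=3):
        self.speed_threshold = speed_threshold  # metres per second, assumed
        self.min_consecutive = min_consecutive  # frames of fast motion required
        self._previous = None
        self._streak = 0

    def update(self, keypoints, dt):
        """Process one frame; return True if an alert should be raised.

        keypoints maps a name (e.g. "right_hand") to an (x, y, z) position
        in metres; dt is the time in seconds since the previous frame.
        """
        if dt <= 0:
            raise ValueError("dt must be positive")
        fast = False
        if self._previous is not None:
            speeds = [
                math.dist(pos, self._previous[name]) / dt
                for name, pos in keypoints.items()
                if name in self._previous
            ]
            fast = bool(speeds) and max(speeds) > self.speed_threshold
        self._previous = dict(keypoints)
        # A slow frame resets the streak, which filters out isolated spikes.
        self._streak = self._streak + 1 if fast else 0
        return self._streak >= self.min_consecutive


# Made-up hand trajectory sampled every 0.1 s: still, moving fast, still.
detector = AgitationDetector()
hand_x = [0.0, 0.0, 0.1, 0.2, 0.3, 0.3]
alerts = [detector.update({"right_hand": (x, 0.0, 0.0)}, dt=0.1) for x in hand_x]
print(alerts)
```

Requiring several consecutive fast frames trades a short detection delay for fewer spurious alarms, which matches the stated goal of keeping alarms relevant.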

This innovation is part of the "Engage 5G and beyond" project, funded by the France Relance 2030 program and led by b<>com, with partners Orange, CHU de Rennes, Eurecom, EDF, Nokia Bell Labs, and the Image et Réseaux cluster. The project aims to build and operate a network of open, national, and sovereign platforms offering services for 5G experimentation applied to healthcare, energy, and Industry 4.0.